[Update May 7, 2019: Giggle Score has been updated to use 7-zip to benchmark the boards instead of sysbench, and the “best value” rankings are now quite different]

People like to compare single board computers, and usually want a simple answer as to which one is better than the others. But in practice that's impossible, because the beauty of SBCs is that they are so versatile and can be used in a wide variety of projects. That means in some cases the “best board” may be completely useless to you since it lacks a critical feature or interface for YOUR project, be it H.265 video encoding or a MIPI DSI display interface.

Still, it’s always fun to look at benchmark scores and try to compare SBCs, and for projects that mostly require CPU processing power it may also be useful. Robbie Ferguson has been developing and maintaining NEMS (Nagios Enterprise Monitoring Server) Linux for single board computers, which runs an after-hours benchmark once per week and logs the server’s score anonymously and securely. That means he has a database with benchmarks from hundreds of boards running NEMS, most of which are Raspberry Pi 3 Model B/B+ boards.

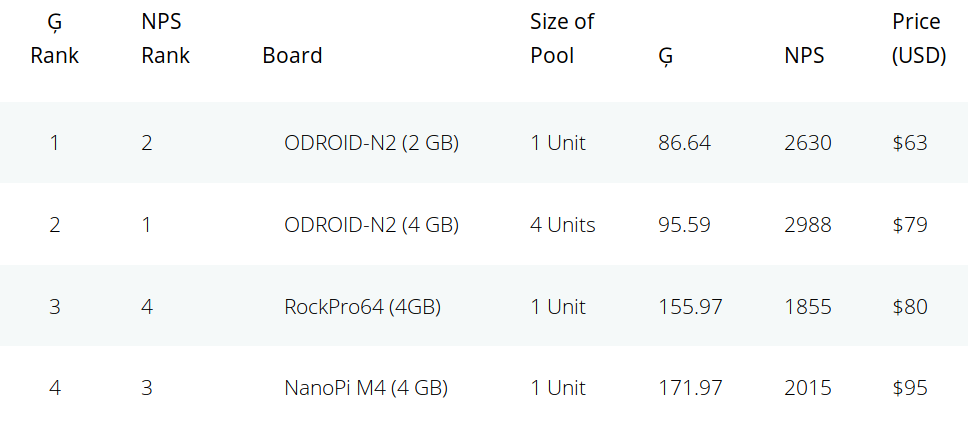

The NEMS Performance Score (NPS) is then weighted by the selling price of the board to derive the Giggle Score, which provides a list of the boards with the best value. As the name implies, you may not want to take it too seriously, but the results are in: the Amlogic S922X-based ODROID-N2 board with 2GB RAM is the board with the best value, followed by the 4GB RAM version, and RockPro64 (4GB) comes in third.
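To make the idea of weighting a benchmark score by price concrete, here is a minimal Python sketch. It assumes the simplest possible weighting, performance points per dollar, which is not necessarily the formula NEMS actually uses for the Giggle Score. The `Board` class, the `value_score` and `rank_by_value` functions, and all board names, scores, and prices are hypothetical placeholders, not real NEMS data.

```python
from dataclasses import dataclass


@dataclass
class Board:
    name: str
    performance_score: float  # hypothetical benchmark score, higher is better
    price_usd: float          # hypothetical selling price in US dollars


def value_score(board: Board) -> float:
    """Performance points per dollar (an assumed weighting, not the NEMS formula)."""
    if board.price_usd <= 0:
        raise ValueError("price must be positive")
    return board.performance_score / board.price_usd


def rank_by_value(boards: list[Board]) -> list[Board]:
    """Return boards sorted from best value to worst value."""
    return sorted(boards, key=value_score, reverse=True)


# Made-up sample data, for illustration only
boards = [
    Board("board-a", 6000.0, 60.0),
    Board("board-b", 2000.0, 35.0),
    Board("board-c", 500.0, 10.0),
]

for board in rank_by_value(boards):
    print(f"{board.name}: {value_score(board):.1f} points per dollar")
```

With a ratio like this, a cheap board can outrank a much faster one if its price is low enough, which is why a value ranking can look very different from a raw performance ranking.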

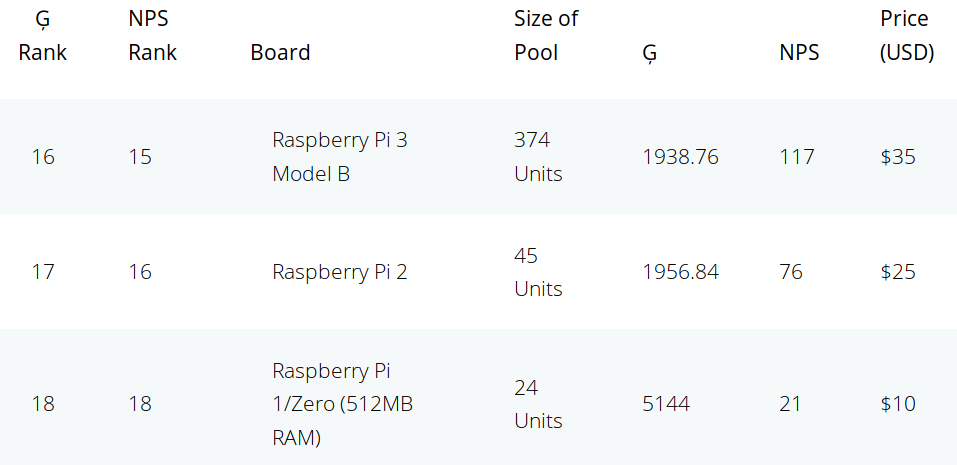

At the other end of the ranking, the three boards with the worst value all come from the Raspberry Pi Foundation: Raspberry Pi 3 Model B, Raspberry Pi 2, and Raspberry Pi 1/Zero, the latter being dead last.

If network monitoring is indeed a low CPU usage task, the Raspberry Pi Zero may ironically be the best value at $10 for running NEMS Linux, as all the processing power potentially delivered by ODROID-N2 may just be wasted since the system may be idle most of the time. I’d assume it all depends on the size of your network.

Jean-Luc started CNX Software in 2010 as a part-time endeavor, before quitting his job as a software engineering manager and starting to write daily news and reviews full time later in 2011.
